HumanTOMATO: Text-aligned Whole-body Motion Generation

Shunlin Lu🍅 2, 3, Ling-Hao Chen🍅 1, 2, Ailing Zeng 2, Jing Lin 1, 2, Ruimao Zhang 3, Lei Zhang 2, and Heung-Yeung Shum 1, 2

🍅Co-first author. Listing order is random.

1Tsinghua University, 2International Digital Economy Academy (IDEA),

3School of Data Science, Shenzhen Research Institute of Big Data, CUHK (SZ)

Abstract

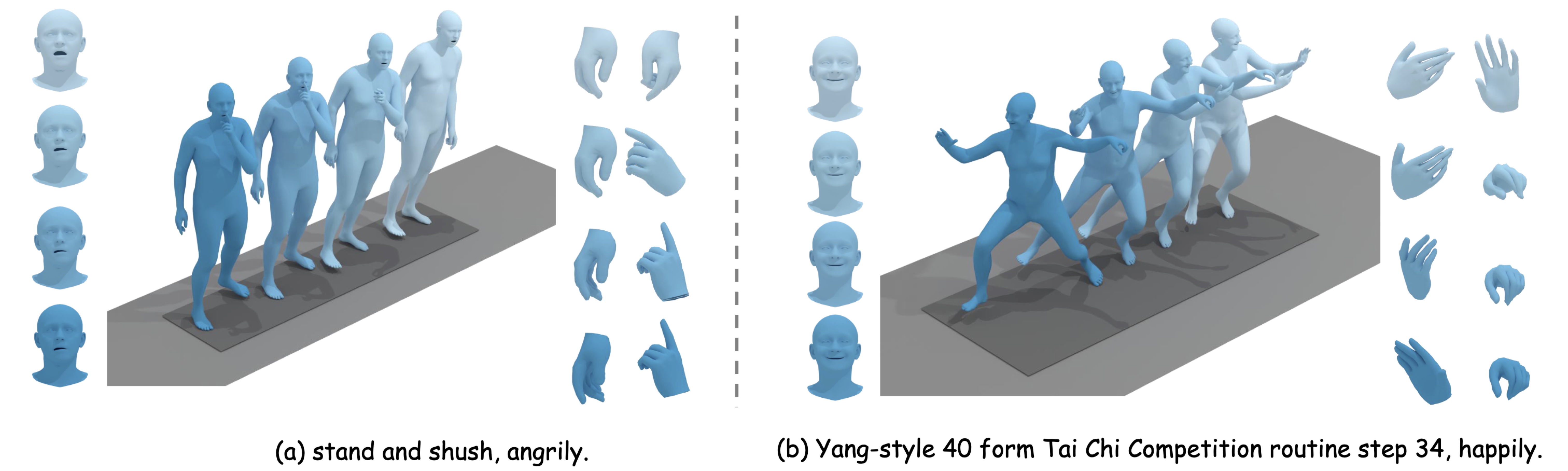

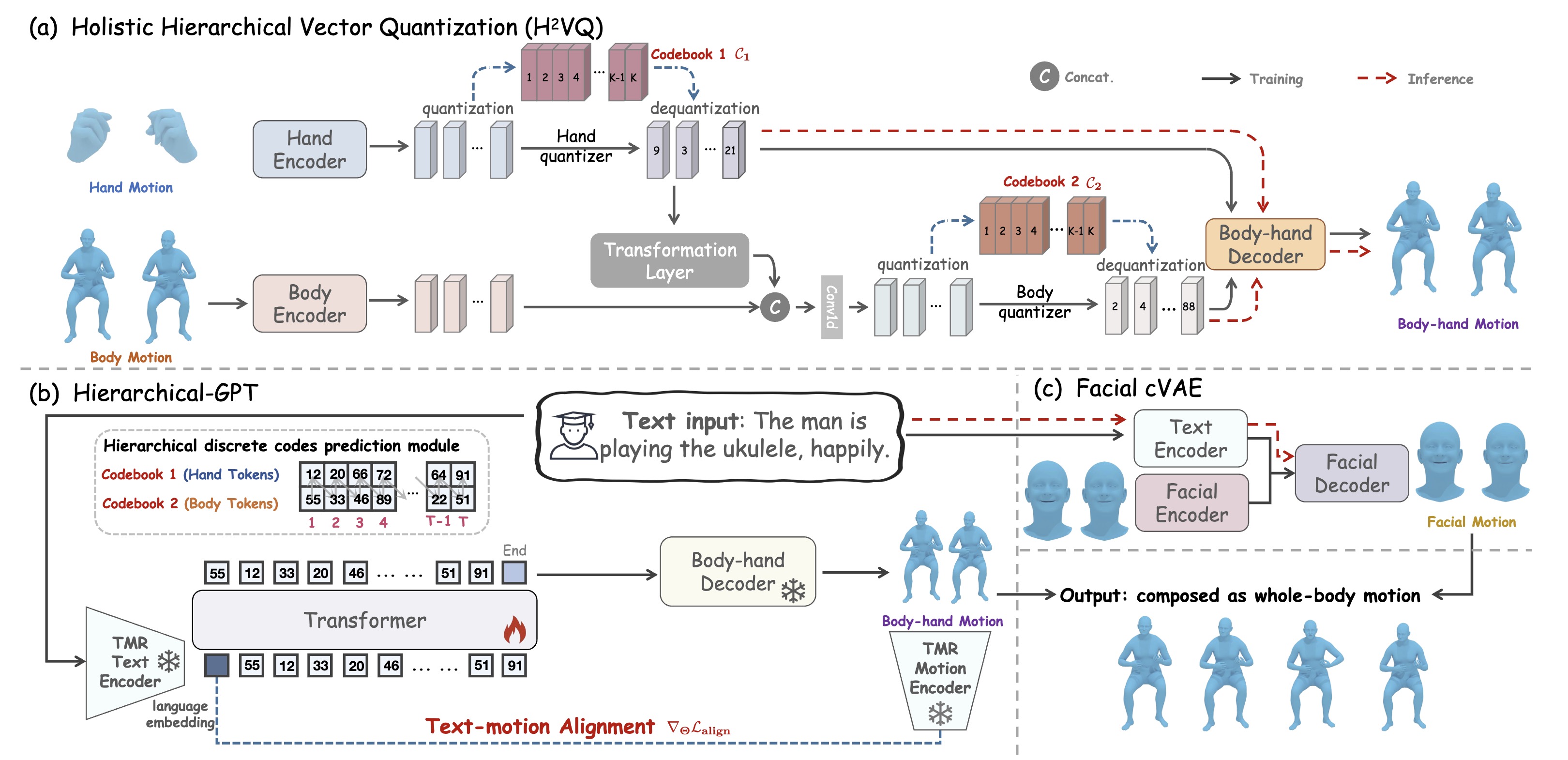

This work targets a novel text-driven whole-body motion generation task, which takes a textual description as input and aims to generate high-quality, diverse, and coherent facial expressions, hand gestures, and body motions simultaneously. Previous works on text-driven motion generation have two main limitations: they ignore the key role of fine-grained hand and face control in vivid whole-body motion generation, and they lack good alignment between text and motion. To address these limitations, we propose a Text-aligned whOle-body Motion generATiOn framework, named HumanTOMATO, which is, to our knowledge, the first attempt towards applicable holistic motion generation in this research area. To tackle this challenging task, our solution includes two key designs: (1) a Holistic Hierarchical VQ-VAE (aka H²VQ) and a Hierarchical-GPT for fine-grained body and hand motion reconstruction and generation with two structured codebooks; and (2) a pre-trained text-motion-alignment model that explicitly helps the generated motion align with the input textual description. Comprehensive experiments verify that our model has significant advantages in both the quality of generated motions and their alignment with text.

Demo Video

Highlight Whole-body Motions

System Overview

Comparing with GT

Comparing with Baselines

How does the motion-aware language prior help motion generation?

Examples of Motion Reconstruction

Citation

@article{humantomato,
  title={HumanTOMATO: Text-aligned Whole-body Motion Generation},
  author={Lu, Shunlin and Chen, Ling-Hao and Zeng, Ailing and Lin, Jing and Zhang, Ruimao and Zhang, Lei and Shum, Heung-Yeung},
  journal={arxiv:2310.12978},
  year={2023}
}

The website template was adapted from HumanMAC Project.